Hi all! Today I'm going to share how to crawl a web page with Scrapy. See my previous post for installation instructions.

It's always best to create a separate directory when you are learning a new language or tool.

Open a terminal (Ctrl+Alt+T):

mkdir (name of the directory)

cd (name of the directory)

To create a new project in Scrapy:

scrapy startproject sample

Now change into the project directory:

cd sample

Run ls to list its contents:

sample scrapy.cfg

Now run cd sample again, then ls once more:

__init__.py items.py pipelines.py settings.py spiders

Scrapy created these files inside the sample folder (the name of the project).

If you already know Django or Python, you will find this layout easy to understand. If not, don't worry; I will try to explain as much as I can.

- scrapy.cfg: the project configuration file

- sample/: the project’s Python module; you’ll later import your code from here.

- sample/items.py: the project’s items file.

- sample/pipelines.py: the project’s pipelines file.

- sample/settings.py: the project’s settings file.

- sample/spiders/: a directory where you’ll later put your spiders.

- sample/__init__.py: marks the sample directory as a Python package so that its modules can be imported.

Now it's time to get into Scrapy and crawl a page.

To crawl a web page, we have to:

- Define items in items.py

- Write a spider to crawl a page and extract items

- Write a pipeline in pipelines.py to store the extracted items

Things to do in items.py:

from scrapy.item import Item, Field

class SampleItem(Item):
    # Define the fields for your item here, like:
    # name = Field()
    title = Field()  # title of a page
    link = Field()   # links on the page
    desc = Field()   # description of a page
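To make the idea of declared fields more concrete, here is a rough plain-Python stand-in for how an item behaves: it works like a dictionary that only accepts the field names you declared. This is a simplified illustration and not Scrapy's actual implementation; the class name DeclaredFieldsItem is made up for this sketch.

```python
class DeclaredFieldsItem(dict):
    """Hypothetical stand-in: a dict that only accepts declared field names."""

    fields = ("title", "link", "desc")  # mirrors the fields in SampleItem

    def __setitem__(self, key, value):
        if key not in self.fields:
            raise KeyError("%s does not support field: %s" % (type(self).__name__, key))
        super().__setitem__(key, value)


item = DeclaredFieldsItem()
item["title"] = ["Top"]
item["link"] = ["/"]

try:
    item["author"] = ["someone"]  # not declared, so it is rejected
    rejected = False
except KeyError:
    rejected = True

print(item, rejected)
```

Scrapy items reject undeclared fields in a similar way, which helps catch typos in field names early.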

Things to do in spiders/

To create a spider, run scrapy genspider (name) (domain name), or simply create a Python file in the spiders folder yourself.

I created mydomain.py in the spiders folder of the project:

# Note: BaseSpider and HtmlXPathSelector are the class names of the Scrapy
# release used in this post; newer releases use scrapy.Spider and
# response.xpath() instead.
from scrapy.spider import BaseSpider
from scrapy.selector import HtmlXPathSelector

from sample.items import SampleItem


class DmozSpider(BaseSpider):
    name = "dmoz"
    allowed_domains = ["dmoz.org"]
    start_urls = [
        "http://www.dmoz.org/Computers/Programming/Languages/Python/Books/",
        "http://www.dmoz.org/Computers/Programming/Languages/Python/Resources/",
    ]

    def parse(self, response):
        # Wrap the response so it can be queried with XPath
        sel = HtmlXPathSelector(response)
        # Treat every <li> inside a <ul> as one site entry
        sites = sel.select('//ul/li')
        items = []
        for site in sites:
            item = SampleItem()
            item['title'] = site.select('a/text()').extract()
            item['link'] = site.select('a/@href').extract()
            item['desc'] = site.select('text()').extract()
            items.append(item)
        return items

Let me explain what the spider does.

Every spider needs three things:

- name

- start_urls

- parse method

name – identifies the spider; it must be unique among the spiders in a project

start_urls – the list of URLs where the crawl begins

parse – the method that extracts data from each downloaded page

allowed_domains – optional; restricts the crawl to the listed domains, so links to other domains are not followed
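The domain restriction can be pictured with a small plain-Python check. This is only a sketch of the idea, not Scrapy's own filtering code; the function name is_allowed is made up here, and it assumes a URL is allowed when its host equals a listed domain or is a subdomain of one.

```python
from urllib.parse import urlparse


def is_allowed(url, allowed_domains):
    """Return True if the URL's host is an allowed domain or a subdomain of one."""
    host = urlparse(url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in allowed_domains)


allowed = ["dmoz.org"]
print(is_allowed("http://www.dmoz.org/Computers/", allowed))
print(is_allowed("http://example.com/page", allowed))
```

Matching on "." + domain rather than on the bare suffix keeps a look-alike host such as notdmoz.org from slipping through.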

That's it. Now let's run the spider and see what happens.

To run a spider:

scrapy crawl [name of the spider]

In our code the spider name is 'dmoz', so the command is:

scrapy crawl dmoz

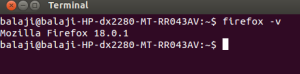

If your code has no errors, the terminal shows the spider being opened, crawling the pages, and then closing, like this:

2013-11-27 16:09:20+0530 [dmoz] INFO: Spider opened

2013-11-27 16:09:20+0530 [dmoz] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2013-11-27 16:09:20+0530 [scrapy] DEBUG: Telnet console listening on 0.0.0.0:6023

2013-11-27 16:09:20+0530 [scrapy] DEBUG: Web service listening on 0.0.0.0:6080

2013-11-27 16:09:21+0530 [dmoz] DEBUG: Crawled (200) <GET http://www.dmoz.org/Computers/Programming/Languages/Python/Books/> (referer: None)

2013-11-27 16:09:21+0530 [dmoz] DEBUG: Scraped from <200 http://www.dmoz.org/Computers/Programming/Languages/Python/Books/>

{'desc': [u'\r\n\r\n '],
 'images': [],
 'link': [u'/'],
 'title': [u'Top']}

2013-11-27 16:09:21+0530 [dmoz] DEBUG: Scraped from <200 http://www.dmoz.org/Computers/Programming/Languages/Python/Books/>

{'desc': [], 'images': [], 'link': [u'/Computers/'], 'title': [u'Computers']}

2013-11-27 16:09:21+0530 [dmoz] DEBUG: Scraped from <200 http://www.dmoz.org/Computers/Programming/Languages/Python/Books/>

{'desc': [],
 'images': [],
 'link': [u'/Computers/Programming/'],
 'title': [u'Programming']}

Want to store the crawled data as JSON or CSV?

To store the crawled items as JSON, run this command:

scrapy crawl dmoz -o items.json -t json

For CSV, change the file name and format accordingly (-o items.csv -t csv).

You will now see items.json in your project directory.
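Once the items are exported, the JSON file holds an array of item objects, and each field is a list because extract() returns lists. The sketch below shows one way to read such data and flatten each field to its first value. To stay self-contained it parses an inline sample shaped like the log output above instead of opening the real file; the helper name flatten is made up for this example.

```python
import json

# Sample data shaped like the exported file; in practice you would load
# the real file, for example with json.load(open("items.json")).
exported = '''
[
  {"title": ["Top"], "link": ["/"], "desc": []},
  {"title": ["Computers"], "link": ["/Computers/"], "desc": []}
]
'''


def flatten(item):
    """Replace each list value with its first element, or None if the list is empty."""
    return {key: (values[0] if values else None) for key, values in item.items()}


rows = [flatten(item) for item in json.loads(exported)]
for row in rows:
    print(row["title"], row["link"])
```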

Scrapy Shell

Scrapy comes with a built-in shell for debugging and testing your code, which makes a developer's job easier.

Inside the project directory, run this command:

scrapy shell

You will see something like this:

2013-11-27 16:23:04+0530 [scrapy] DEBUG: Web service listening on 0.0.0.0:6080

[s] Available Scrapy objects:

[s] item {}

[s] settings <CrawlerSettings module=<module 'sample.settings' from '/home/bala/scrapycodes/sample/sample/settings.pyc'>>

[s] Useful shortcuts:

[s] shelp() Shell help (print this help)

[s] fetch(req_or_url) Fetch request (or URL) and update local objects

[s] view(response) View response in a browser

Now let's try fetching a page:

fetch("http://www.google.co.in")

2013-11-27 16:25:23+0530 [default] INFO: Spider opened

2013-11-27 16:25:29+0530 [default] DEBUG: Crawled (200) <GET http://www.google.co.in> (referer: None)

[s] Available Scrapy objects:

[s] hxs <HtmlXPathSelector xpath=None data=u'<html itemscope="" itemtype="http://sche'>

[s] item {}

[s] request <GET http://www.google.co.in>

[s] response <200 http://www.google.co.in> # Things to note

[s] settings <CrawlerSettings module=<module 'sample.settings' from '/home/bala/scrapycodes/sample/sample/settings.pyc'>>

[s] spider <BaseSpider ‘default’ at 0x3cde3d0>

[s] Useful shortcuts:

[s] shelp() Shell help (print this help)

[s] fetch(req_or_url) Fetch request (or URL) and update local objects

[s] view(response) View response in a browser

hxs is the HTML XPath selector for the fetched page.

We now have the response for the requested URL.

Let's get the title of the page.

hxs.select("//title")

[<HtmlXPathSelector xpath='//title' data=u'<title>Google</title>'>]

This returns a list of selector objects, each still wrapping the matched element with its HTML tags.

To get the matched element as a string, call extract():

hxs.select("//title").extract()

[u'<title>Google</title>']

extract() returns the matched elements as a list of unicode strings.

Things to note: results come back as a list, and the u prefix marks a unicode string.

To get only the text inside the tag, add text() to the XPath and take the first element of the list:

hxs.select("//title/text()").extract()[0]

u'Google'
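One thing to watch out for: indexing with [0] raises an IndexError when the XPath matches nothing, because the extracted list is empty. A small helper can guard against that. This is a plain-Python sketch; the name first is made up here and is not part of Scrapy.

```python
def first(values, default=None):
    """Return the first element of a list, or a default when the list is empty."""
    return values[0] if values else default


print(first(["Google"]))      # a page where the XPath matched
print(first([], default=""))  # a page where nothing matched
```

In a spider you could wrap each extract() call this way so that a missing element yields an empty value instead of stopping the parse.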

To learn more about XPath, use this XPath reference.
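If you want to experiment with the path expressions without Scrapy, Python's standard library can help. The sketch below uses xml.etree.ElementTree, which supports only a subset of XPath and needs well-formed markup, so treat it as a practice tool and not as a replacement for Scrapy's selectors. The HTML snippet is made up for this example.

```python
import xml.etree.ElementTree as ET

# A tiny, well-formed snippet standing in for a real page (made up for this sketch).
page = """
<html><body>
  <ul>
    <li><a href="/Computers/">Computers</a> - hardware and software</li>
    <li><a href="/Computers/Programming/">Programming</a> - languages and tools</li>
  </ul>
</body></html>
"""

root = ET.fromstring(page.strip())

entries = []
for li in root.findall(".//ul/li"):      # same idea as //ul/li
    a = li.find("a")                     # the <a> child of this <li>
    entries.append({
        "title": a.text,                 # like a/text()
        "link": a.get("href"),           # like a/@href
        "desc": (a.tail or "").strip(),  # the text that follows the link
    })

for entry in entries:
    print(entry)
```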

That's it. Happy coding!